
Trying to understand TensorFlow, Nengo, and NengoDL all at the same time will make things very confusing since there are multiple concepts and ways of doing things.

Once again, I recommend starting with TensorFlow and getting a model working there first.

I'm not entirely sure what you are attempting to achieve here, so you are going to have to bear with me. Do you want to use the arrays as inputs to your TensorFlow network? If so, it seems like you are already doing that with this code:

```python
prediction = model.predict(X_test)
```

If you want to use the `X` array as the input to generate model predictions, just replace `X_test` with `X`.

> How to convert these inputs to nengo method or tensors?

As for converting the NumPy arrays to tensors, I'm not sure what you mean by that. As for converting the NumPy arrays into Nengo methods, there are multiple ways of doing it, each depending on which Nengo product you are using.

If you are using regular Nengo, then you can use a `nengo.Node` to feed those NumPy arrays to your network:

```python
x_node = nengo.Node(X)  # Make a Nengo node that just outputs the X array
```

If you are using NengoDL, then you'll need to define an input layer (i.e., a `tf.keras.Input` layer), and then you can specify the NumPy arrays as inputs when you run the simulation (see here).

```python
probe_layer = nengo.Probe(…_output_at(-1)])
with nengo.Simulator(…) as sim:
    …
```

But I got 0's as output, like this. Could you please suggest what went wrong?

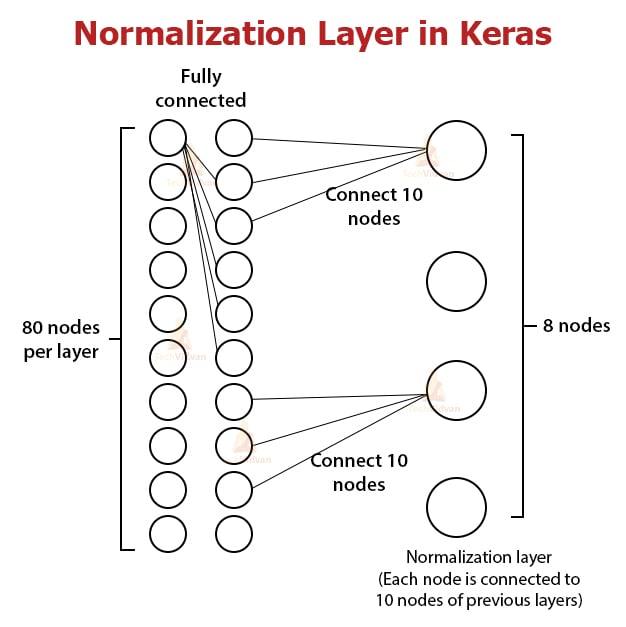

```python
import tensorflow as tf
import nengo_dl


def modelDef():
    inp = tf.keras.Input(shape=(20), name="input")
    to_spikes_layer = tf.…1D(
        …
    )
    …
    flatten = tf.…(name="flatten")(to_spikes)
    dense0_layer = tf.…(units=10, activation=tf.nn.relu, name="dense0")
    …
    # since this final output layer has no activation function,
    # it will be converted to a `nengo.Node` and run off-chip
    dense1 = tf.…(units=num_classes, name="dense1")(dense0)
    model = tf.keras.Model(inputs=inp, outputs=dense1)
    …


def train(params_file="./keras_to_loihi_params123", epochs=1, **kwargs):
    model = modelDef()
    converter = nengo_dl.Converter(model, **kwargs)
    with nengo_dl.Simulator(…, seed=0, minibatch_size=miniBatch) as sim:
        …
        converter.outputs…: tf.losses.MeanSquaredError()
        …
```
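For NengoDL, input arrays passed to the simulator carry a time axis, with shape `(n_samples, n_steps, n_features)`, so a 2D `(n_samples, n_features)` array needs reshaping before it can be used as the simulation input. A minimal NumPy sketch, assuming the question's `X` is such a 2D array; the helper name `add_time_axis`, the toy data, and `n_steps=30` are hypothetical choices for illustration:

```python
import numpy as np


def add_time_axis(x, n_steps):
    """Reshape (n_samples, n_features) data to (n_samples, n_steps, n_features)
    by repeating each sample across n_steps simulation timesteps."""
    x = np.asarray(x)
    if x.ndim != 2:
        raise ValueError(f"expected a 2D array, got shape {x.shape}")
    # x[:, None, :] inserts a time axis of length 1; np.tile repeats it n_steps times
    return np.tile(x[:, None, :], (1, n_steps, 1))


# Hypothetical data: 8 samples with 20 features each
X = np.arange(8 * 20, dtype=np.float32).reshape(8, 20)
X_sim = add_time_axis(X, n_steps=30)
print(X_sim.shape)  # (8, 30, 20)
```

With a `nengo_dl.Converter`, the tiled array would then be passed keyed by the converted input node, e.g. `sim.predict({converter.inputs[inp]: X_sim})`. Repeating each sample over several timesteps gives spiking neurons time to respond; NengoDL's Keras-to-SNN examples tile their test images over timesteps in a similar way.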
